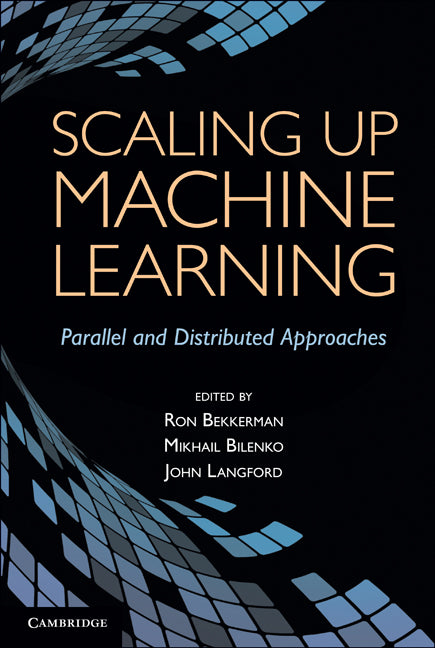

Scaling up Machine Learning

Parallel and Distributed Approaches

This integrated collection covers a range of parallelization platforms, concurrent programming frameworks and machine learning settings, with case studies.

Edited by Ron Bekkerman, Mikhail Bilenko and John Langford

ISBN 9780521192248, Cambridge University Press

Hardback, published 30 December 2011

492 pages, 144 b/w illus.

25.9 x 18.5 x 3.3 cm, 1 kg

'This unique, timely book provides a 360-degree view and understanding of both conceptual and practical issues that arise when implementing leading machine learning algorithms on a wide range of parallel and high-performance computing platforms. It will serve as an indispensable handbook for the practitioner of large-scale data analytics and a guide to dealing with BIG data and making sound choices for efficiently applying learning algorithms to them. It can also serve as the basis for an attractive graduate course on parallel/distributed machine learning and data mining.' Joydeep Ghosh, University of Texas

This book presents an integrated collection of representative approaches for scaling up machine learning and data mining methods on parallel and distributed computing platforms. Demand for parallelizing learning algorithms is highly task-specific: in some settings it is driven by enormous dataset sizes, in others by model complexity or by real-time performance requirements. Making task-appropriate algorithm and platform choices for large-scale machine learning requires understanding the benefits, trade-offs and constraints of the available options. Solutions presented in the book cover a range of parallelization platforms, from FPGAs and GPUs to multi-core systems and commodity clusters; concurrent programming frameworks, including CUDA, MPI, MapReduce and DryadLINQ; and learning settings (supervised, unsupervised, semi-supervised and online learning). Extensive coverage of the parallelization of boosted trees, SVMs, spectral clustering, belief propagation and other popular learning algorithms, together with deep dives into several applications, makes the book equally useful for researchers, students and practitioners.

1. Scaling up machine learning: introduction Ron Bekkerman, Mikhail Bilenko and John Langford

Part I. Frameworks for Scaling Up Machine Learning

2. MapReduce and its application to massively parallel learning of decision tree ensembles Biswanath Panda, Joshua S. Herbach, Sugato Basu and Roberto J. Bayardo

3. Large-scale machine learning using DryadLINQ Mihai Budiu, Dennis Fetterly, Michael Isard, Frank McSherry and Yuan Yu

4. IBM parallel machine learning toolbox Edwin Pednault, Elad Yom-Tov and Amol Ghoting

5. Uniformly fine-grained data parallel computing for machine learning algorithms Meichun Hsu, Ren Wu and Bin Zhang

Part II. Supervised and Unsupervised Learning Algorithms

6. PSVM: parallel support vector machines with incomplete Cholesky factorization Edward Chang, Hongjie Bai, Kaihua Zhu, Hao Wang, Jian Li and Zhihuan Qiu

7. Massive SVM parallelization using hardware accelerators Igor Durdanovic, Eric Cosatto, Hans Peter Graf, Srihari Cadambi, Venkata Jakkula, Srimat Chakradhar and Abhinandan Majumdar

8. Large-scale learning to rank using boosted decision trees Krysta M. Svore and Christopher J. C. Burges

9. The transform regression algorithm Ramesh Natarajan and Edwin Pednault

10. Parallel belief propagation in factor graphs Joseph Gonzalez, Yucheng Low and Carlos Guestrin

11. Distributed Gibbs sampling for latent variable models Arthur Asuncion, Padhraic Smyth, Max Welling, David Newman, Ian Porteous and Scott Triglia

12. Large-scale spectral clustering with MapReduce and MPI Wen-Yen Chen, Yangqiu Song, Hongjie Bai, Chih-Jen Lin and Edward Y. Chang

13. Parallelizing information-theoretic clustering methods Ron Bekkerman and Martin Scholz

Part III. Alternative Learning Settings

14. Parallel online learning Daniel Hsu, Nikos Karampatziakis, John Langford and Alex J. Smola

15. Parallel graph-based semi-supervised learning Jeff Bilmes and Amarnag Subramanya

16. Distributed transfer learning via cooperative matrix factorization Evan Xiang, Nathan Liu and Qiang Yang

17. Parallel large-scale feature selection Jeremy Kubica, Sameer Singh and Daria Sorokina

Part IV. Applications

18. Large-scale learning for vision with GPUs Adam Coates, Rajat Raina and Andrew Y. Ng

19. Large-scale FPGA-based convolutional networks Clement Farabet, Yann LeCun, Koray Kavukcuoglu, Berin Martini, Polina Akselrod, Selcuk Talay and Eugenio Culurciello

20. Mining tree-structured data on multicore systems Shirish Tatikonda and Srinivasan Parthasarathy

21. Scalable parallelization of automatic speech recognition Jike Chong, Ekaterina Gonina, Kisun You and Kurt Keutzer

Subject Areas: Pattern recognition [UYQP], Machine learning [UYQM], Computing & information technology [U]